What Your ChatGPT Error Message Means

In the realm of artificial intelligence, Large Language Models (LLMs) have become revolutionary tools, reshaping numerous industries and applications. From writing assistance to customer service, and from medical diagnosis to legal advice, these models offer unprecedented potential.

Despite their robust capabilities, LLMs and their behavior are not straightforward to understand. When they fail to accomplish a task, this ‘failure’ often hides a more complex scenario. Sometimes, when your LLM (such as the popular ChatGPT) seems to be at a loss, it isn’t because it can’t perform the task. The cause is a less obvious issue, like a ‘loop’ in its generation process or a plug-in timeout.

Welcome to the intricate world of prompt engineering, where understanding the language of ‘failures’ and ‘limitations’ can unlock new layers of LLM performance. This blog will guide you through the maze of LLM functionality, focusing on what your ChatGPT is and isn’t telling you when it encounters a problem. So, let’s decode the silence of our LLMs and uncover the hidden narratives behind their ‘unexpected behavior’.

Breaking Down Large Language Models: Functionality and Limitations

Imagine a labyrinth of possibilities, where every new sentence, every fresh piece of information, leads you down a different path. This is, in essence, the decision-making landscape of an LLM like ChatGPT. Each prompt given to an LLM is like the entrance to a new maze. The model’s objective is to navigate this maze and find the most relevant, coherent, and accurate response. In practice, the model builds that response one token (a word or word fragment) at a time, and every token it picks shapes the paths that remain open.

How does it accomplish this? To understand that, we first need to look at the key components of LLMs. These models are built on an architecture known as the Transformer. This deep learning design uses a mechanism called attention to focus on different parts of the input when generating each part of the output. It’s akin to a highly skilled multitasker who can prioritize and divide attention across various tasks based on their importance.
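The sketch below makes the idea of attention a little more concrete. It is a minimal version of scaled dot-product attention for a single query, written in plain Python. The vectors are made-up toy values and the function names are illustrative. A real Transformer computes this with learned projection matrices, many attention heads, and batched matrix operations.

```python
import math

def softmax(scores):
    # Subtract the max before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector (toy version)."""
    d = len(query)
    # Score each key by its dot product with the query, scaled by sqrt(d).
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    # The output is the attention-weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Hypothetical toy inputs: three positions, two dimensions each.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, output = attention([1.0, 0.0], keys, values)
print([round(w, 3) for w in weights], [round(x, 3) for x in output])
```

The weights show how strongly the query ‘attends’ to each position. The first and third keys share the query’s direction, so they receive most of the weight, and the output is mostly a blend of their values.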

However, even the best multitasker can run into hurdles. In the case of LLMs, these hurdles often show up as a decision-making loop the model can’t escape, such as repeating the same phrase or step over and over. It’s like being stuck in a revolving door, moving around in circles without making any progress.

A loop doesn’t necessarily mean the model is incapable of performing the task at hand. It can instead be a sign of optimization issues. The model’s decision-making process, or the settings that control how it generates text, may need further fine-tuning to avoid such loops.
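One visible symptom of such a loop is output that repeats the same phrase over and over. The sketch below is a simple heuristic check for that symptom. It assumes the output has already been split into tokens (here, plain words). The function name `is_looping` and its thresholds are hypothetical choices for illustration, not part of any ChatGPT API.

```python
def is_looping(tokens, max_period=20, min_repeats=3):
    """Heuristic: True if the tail of `tokens` is one chunk repeated back to back.

    `max_period` (longest chunk checked) and `min_repeats` (how many copies
    count as a loop) are arbitrary, hypothetical thresholds.
    """
    for period in range(1, max_period + 1):
        needed = period * min_repeats
        if len(tokens) < needed:
            break  # longer periods need even more tokens, so stop early
        tail = tokens[-needed:]
        chunk = tail[:period]
        # Compare every period-sized slice of the tail with the first one.
        if all(tail[i:i + period] == chunk for i in range(0, needed, period)):
            return True
    return False

stuck = "the door spins and the door spins and the door spins and".split()
fine = "once upon a time a fox found a key".split()
print(is_looping(stuck), is_looping(fine))
```

A check like this only detects the symptom. Fixing it still means adjusting the prompt or the generation settings, as discussed below.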

As we delve further into the behavior of LLMs, it’s crucial to remember that a failure or limitation signaled by your LLM might not always be what it seems.

Let’s explore this in more detail and bring a new perspective to understanding and resolving LLM performance issues. The true strength of these models lies not just in their ability to generate human-like text. It also lies in their potential for better decision making and adaptation when faced with problems. To unlock this potential, we need to listen to what the LLM isn’t saying as much as to what it is.

Understanding and Overcoming Those Error Messages

The world of large language models, like many fields of advanced technology, has its own unique language. For users and developers of LLMs, understanding this language can make the difference between effective problem-solving and constant frustration. Error messages are an integral part of this language.

When an LLM like ChatGPT encounters a problem and fails to execute a task as expected, it doesn’t typically communicate its struggle with words of defeat. It communicates through error messages instead. These messages often signal an internal technical issue that is getting in the way, rather than a limitation of the model itself.

As we mentioned, this could be the result of the model getting caught in a loop during its decision-making process, causing it to either repeat certain steps or halt altogether. This doesn’t mean that the model is incapable of completing the task. It means the model has encountered a problem in its algorithm that needs to be addressed.

Similarly, a plug-in timeout can happen when a plug-in takes longer to finish a task than the host application is willing to wait. A plug-in is an additional software component that extends the capabilities of the main software, often by calling an outside service. Many LLMs and plug-ins weren’t originally designed for the fast-paced environment of web-based applications. They might struggle to keep up with its speed requirements, leading to plug-in timeouts. Again, this doesn’t reflect the model’s inability to execute the task. It points to a compatibility or speed issue that needs troubleshooting.
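The mechanics of a timeout are easy to see in miniature. In this sketch, the caller gives a hypothetical plug-in a fixed time budget. If the plug-in hasn’t finished by then, the caller stops waiting and reports a failure, even though the plug-in itself might have succeeded a moment later. `slow_plugin` and `call_with_timeout` are made-up names, and the delays are arbitrary.

```python
import concurrent.futures
import time

def slow_plugin(query, delay_s=0.5):
    # Hypothetical plug-in: simulates an outside service that takes delay_s to answer.
    time.sleep(delay_s)
    return f"result for {query!r}"

def call_with_timeout(func, arg, timeout_s):
    """Return func(arg), or None if it doesn't finish within timeout_s seconds."""
    executor = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    future = executor.submit(func, arg)
    try:
        return future.result(timeout=timeout_s)
    except concurrent.futures.TimeoutError:
        # The caller gives up here; the plug-in thread keeps running in the background.
        return None
    finally:
        # Don't block on a still-running call; just stop accepting new work.
        executor.shutdown(wait=False)

print(call_with_timeout(slow_plugin, "weather", timeout_s=0.1))  # None: budget too short
print(call_with_timeout(slow_plugin, "weather", timeout_s=2.0))  # finished in time
```

The same plug-in ‘fails’ or succeeds depending only on the time budget. This is why a timeout says more about speed than about capability.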

In both these examples, the error message is not a dead end but a signal. It indicates the need for model optimization, performance enhancements, or refinements in prompt engineering. Interpreting these ‘error messages’ correctly is critical to improving the system’s performance and reliability. Doing so turns a seemingly failed attempt into an opportunity for refinement and growth.

Error messages may seem like stumbling blocks, but they are really stepping stones toward a better, more efficient Large Language Model. Interpreting these messages and understanding what they truly indicate is the first step. The next step involves strategies to overcome these issues and optimize the performance of the model.

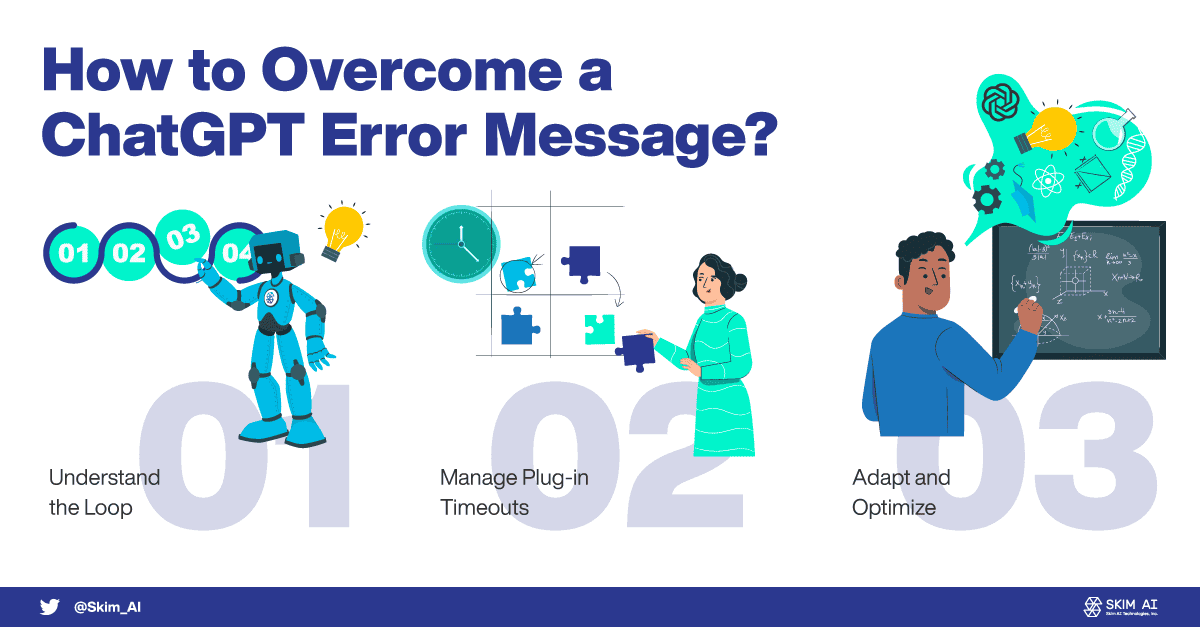

Understanding the Loop: The key to managing a loop situation is to understand the nature of the decision-making process in LLMs. When the model gets stuck in a loop, we can tweak the prompt or adjust the underlying algorithm to help it navigate out of the loop and continue its task. Understanding how the LLM makes decisions equips us with the necessary tools to guide the model and break it free from any decision-making loops.
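One concrete way to ‘adjust the underlying algorithm’ is to penalize tokens the model has already produced, which makes repeating itself less attractive. OpenAI’s API exposes this idea through its `frequency_penalty` and `presence_penalty` parameters. The sketch below applies a simplified version of that kind of adjustment to a toy set of next-token scores (logits). The scores, the penalty values, and the function name are assumptions for illustration.

```python
from collections import Counter

def apply_penalties(logits, generated, frequency_penalty=0.5, presence_penalty=0.5):
    """Lower the scores of tokens that already appear in the generated text."""
    counts = Counter(generated)
    adjusted = dict(logits)  # leave the original scores untouched
    for token, count in counts.items():
        if token in adjusted:
            # Frequency penalty grows with each repeat; presence penalty applies once.
            adjusted[token] -= count * frequency_penalty + presence_penalty
    return adjusted

# Hypothetical next-token scores: without penalties, "and" would win again.
logits = {"and": 2.0, "the": 1.5, "end": 1.0}
generated = ["and", "and", "the"]

adjusted = apply_penalties(logits, generated)
print(max(logits, key=logits.get))      # choice before penalties
print(max(adjusted, key=adjusted.get))  # choice after penalties
```

Larger penalties push the model harder away from repetition. If they are set too high, the model may avoid words it legitimately needs to reuse.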

Managing Plug-in Timeouts: These are often related to how well the model and its plug-ins cope with high-speed, web-based environments. Adjusting the model’s speed, refining the plug-in’s performance, or optimizing the web compatibility of the model can alleviate such issues. A key strategy here is to constantly monitor and fine-tune the performance of plug-ins so they keep up with the fast-paced requirements of the web.
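When timeouts are occasional rather than constant, a common mitigation is to retry the call with exponential backoff, waiting a little longer after each failure. This is a generic pattern, not something specific to ChatGPT. The sketch assumes the plug-in call signals a timeout by raising Python’s built-in `TimeoutError`, and `flaky_plugin` is a made-up stand-in.

```python
import time

def retry_with_backoff(func, attempts=3, base_delay=0.1, max_delay=2.0):
    """Call func(), retrying on TimeoutError with exponentially growing waits."""
    for attempt in range(attempts):
        try:
            return func()
        except TimeoutError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the timeout to the caller
            # Wait base_delay, then 2x, 4x, ... but never longer than max_delay.
            time.sleep(min(max_delay, base_delay * 2 ** attempt))

calls = {"n": 0}

def flaky_plugin():
    # Hypothetical plug-in that times out on its first two calls.
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("plug-in did not respond in time")
    return "ok"

result = retry_with_backoff(flaky_plugin, attempts=3, base_delay=0.01)
print(result, calls["n"])
```

Retries help only with transient slowness. If every call times out, the plug-in itself needs attention. Production systems often also add random jitter to the delay so that many clients don’t retry at the same moment.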

Adapting and Optimizing: An important part of overcoming these error messages is the willingness to continuously adapt and optimize the model. This could mean revising the model’s parameters, refining the prompt engineering process, or even improving the model’s decision-making capabilities. It’s a continuous process of learning, adapting, and refining.

By employing these strategies, we can transform error messages from perceived ‘failures’ into opportunities for enhancements, leading to a more reliable and efficient Large Language Model.

Real-life Examples and Solutions

Let’s delve into some real-life scenarios that you might encounter and how to overcome them:

The Case of the Never-ending Story

Consider an instance where an LLM, like ChatGPT, is being used for automated story generation. The task is to generate a short story based on a user-supplied prompt. However, the model gets stuck in a loop, continuously generating more and more content without reaching a conclusion. It looks like a ‘failure’, since the model never delivers the concise story that was expected.

- The true issue: The model has gotten stuck in its decision-making loop, continuously extending the story instead of wrapping it up.

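- The solution: A small tweak to the prompt (for example, stating a target length and asking for a clear ending) could steer the model out of the loop. So could a subtle adjustment to the model’s parameters (such as a cap on output length). Either change can enable the model to complete the task successfully.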
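Both kinds of fix can be sketched as a generation loop with two safeguards. The first is a stop sequence that the prompt asks the model to end with. The second is a hard cap on length set through the model’s parameters. Here `next_token` is a hypothetical stand-in for the model. The `"stop"`/`"length"` labels loosely mirror the `finish_reason` values that OpenAI’s Chat Completions API reports. Everything else is an illustrative assumption.

```python
def generate(next_token, max_tokens=50, stop="THE END"):
    """Generate token by token, ending at the stop sequence or the length cap."""
    text = ""
    for _ in range(max_tokens):
        text += next_token(text)
        if stop in text:
            # The story reached its requested ending: cut the marker off and finish.
            return text[: text.index(stop)], "stop"
    # The cap kicked in before the story ended.
    return text, "length"

# A hypothetical "model" that never wraps up its story.
endless, endless_reason = generate(lambda text: "and then ", max_tokens=5)
print(endless_reason)

# A hypothetical "model" that ends with the marker the prompt asked for.
script = iter(["Once ", "upon ", "a ", "time. ", "THE END"])
story, story_reason = generate(lambda text: next(script))
print(repr(story), story_reason)
```

A `length` finish is a useful signal. It tells you the story was cut off by the cap rather than concluded, which suggests the prompt needs a clearer instruction about length or ending.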

The Sluggish Web Assistant

Suppose an LLM is deployed as a virtual assistant on a web platform, where it is supposed to respond to user queries in real time. However, its responses sometimes lag, and occasionally it fails to respond at all.

- The apparent problem: The model seems to be incompatible with the real-time, high-speed requirements of a web platform.

- The real issue: Plug-in timeout. The LLM’s plug-in isn’t keeping up with the fast-paced web environment.

- The solution: Optimizing the model’s speed, refining the performance of the plug-in, or improving the web compatibility of the model can alleviate this issue. It’s all about continuously monitoring and fine-tuning to match the performance demands of the web.
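‘Continuously monitoring’ can start as simply as recording how long each response takes and checking a high percentile against a latency budget. The sketch below is a hypothetical monitor, not part of any specific platform. The window size, the 2-second budget, and the nearest-rank 95th percentile are all assumptions you would tune for your own service.

```python
from collections import deque

class LatencyMonitor:
    """Tracks recent response times and flags when they drift over budget."""

    def __init__(self, window=100, budget_s=2.0):
        self.samples = deque(maxlen=window)  # keeps only the most recent calls
        self.budget_s = budget_s

    def record(self, seconds):
        self.samples.append(seconds)

    def p95(self):
        # Nearest-rank 95th percentile; rank = ceil(0.95 * n) using integer math.
        # Assumes at least one sample has been recorded.
        ordered = sorted(self.samples)
        rank = (95 * len(ordered) + 99) // 100
        return ordered[rank - 1]

    def over_budget(self):
        return bool(self.samples) and self.p95() > self.budget_s

monitor = LatencyMonitor(budget_s=2.0)
for seconds in [0.4] * 19 + [6.0]:
    monitor.record(seconds)
print(monitor.p95(), monitor.over_budget())  # a single slow outlier doesn't trip it

for seconds in [3.0] * 20:
    monitor.record(seconds)
print(monitor.p95(), monitor.over_budget())  # sustained slowness does
```

Watching a percentile rather than the single slowest call keeps one unlucky request from raising an alarm. A steady drift toward the budget still shows up early.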

The Misleading Translator

An LLM is tasked with language translation. Occasionally, it returns an error message indicating that it is unable to perform the translation.

- The perceived failure: The model seems incapable of translating certain phrases or sentences.

- The actual issue: The LLM might be running into unexpected behavior due to complexities in the input text or subtleties in the languages involved.

- The solution: A careful evaluation of the input text and prompt, possibly followed by some refinement in the model’s parameters or the translation prompt, can often help the model overcome such challenges.
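One way to carry out that ‘careful evaluation of the input text’ is to split long or dense input into smaller pieces and give the model explicit instructions for each one. This is a general technique rather than something prescribed above. The helper names, the character budget, and the prompt wording below are all hypothetical. The sentence splitter is deliberately naive and will also break after abbreviations that end in a period.

```python
import re

def chunk_sentences(text, max_chars=200):
    """Group sentences into chunks of at most max_chars characters where possible."""
    # Naive split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            chunks.append(current)  # current chunk is full; start a new one
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks

def build_translation_prompt(chunk, source="German", target="English"):
    # Hypothetical prompt template with explicit instructions for the model.
    return (f"Translate the following {source} text into {target}. "
            "Keep names and numbers unchanged.\n\n" + chunk)

text = "Guten Morgen. Wie geht es dir? Ich bin müde."
for chunk in chunk_sentences(text, max_chars=20):
    print(build_translation_prompt(chunk))
```

A single sentence longer than `max_chars` is kept whole rather than cut mid-sentence. This trades strict size limits for translatable units.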

These examples underline the theme that ‘failures’ in LLMs are often not a sign of the model’s incapability, but rather indications of areas where further optimization or adaptation is required. With a deeper understanding of what the LLM isn’t saying, we can turn these ‘failures’ into opportunities for improvement and enhancement.

Deciphering LLM’s Silent Messages

In this digital era, as we continually integrate large language models into our daily lives, it is crucial to recognize their extraordinary capabilities while understanding their limitations and the unique challenges they face.

When an LLM encounters a problem, it isn’t necessarily a ‘failure’ in the conventional sense. Instead, it’s often a silent signal – an unspoken word – pointing towards a specific issue like a decision loop, a plug-in problem, or unexpected behavior that has interfered with the model’s task.

Understanding these silent messages from the LLM can allow us to adapt, optimize, and improve its performance. Therefore, the key lies not in focusing on the error message alone, but in unraveling the deeper, often hidden, meanings behind these messages.

As we move forward, it’s essential that we continue to advance our understanding of LLMs and cultivate our ability to decipher what these intelligent models aren’t saying. After all, it is this understanding and our ability to respond to these unspoken words that will truly enable us to unlock the full potential of these incredible AI tools.