What is an Adversarial AI Attack?

Technologies like artificial intelligence (AI) and machine learning (ML), including deep learning, offer a range of business benefits to enterprises. At the same time, adversarial AI attacks are a growing security challenge for modern systems.

What is an Adversarial AI attack? It is a form of cyberattack that “fools” AI models with deceptive data. Also known as Adversarial ML, this form of attack has been reported mostly in image classification and spam detection.

Next, let’s discuss the various risks posed by an Adversarial AI attack.

Risks posed by an Adversarial AI attack

According to Alexey Rubtsov of the Global Risk Institute, “adversarial machine learning exploits vulnerabilities and specificities of ML models”. For example, adversarial ML can cause an ML-based trading algorithm to make incorrect trading decisions.

How does an Adversarial AI attack pose a risk to AI and ML systems? Here are some common risks:

Social engineering on social media: Here, the attacker uses automation to “scrape” user profiles on social media platforms, then automatically generates content to “lure” the targeted user.

Deepfakes: Often used in banking fraud, deepfakes are AI-generated or AI-manipulated audio, images, and video that threat actors use to achieve a range of nefarious objectives, including:

Blackmail and extortion

Damaging an individual’s credibility and image

Document fraud

Harassment of individuals or groups on social media

Malware hiding: Here, threat actors leverage ML to hide malware within malicious traffic that looks normal to regular users.

Passwords: Adversarial AI attacks can even analyze a large set of passwords and generate variations. In other words, these attacks make the process of cracking passwords more "scientific". Apart from passwords, ML enables threat actors to solve CAPTCHAs too.

Examples of Adversarial AI Attacks

An adversarial example is an input that hackers deliberately design to cause the AI model to make a mistake. Typically, it is a slightly corrupted version of a valid model input.

Here are some popular examples of methods used in Adversarial AI attacks:

Fast Gradient Sign Method (FGSM): This is a gradient-based method that generates adversarial examples to cause the misclassification of images. It does this by shifting each pixel by a small, fixed amount in the direction given by the sign of the gradient of the model's loss with respect to the input.

Limited-memory Broyden-Fletcher-Goldfarb-Shanno (L-BFGS): L-BFGS is a non-linear, gradient-based numerical optimization algorithm. In this attack, it is used to search for the smallest perturbation of an image that still causes misclassification. This method is very effective at generating adversarial examples, although it is computationally expensive.

DeepFool attack: This is another method that is highly effective at producing adversarial examples with a high misclassification rate and small perturbations. It is an untargeted adversarial sample generation technique that minimizes the Euclidean distance between the original and perturbed samples.

Jacobian-based Saliency Map Attack (JSMA): In this form of adversarial AI attack, hackers use feature selection to minimize the number of modified features needed to cause misclassification. Flat perturbations are added iteratively to the features in decreasing order of their saliency value.
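To make the gradient-based methods above more concrete, here is a minimal sketch in plain Python. It assumes a toy logistic regression model with made-up weights (`w`, `b`) and a made-up input (`x`), none of which come from a real system. The `fgsm` function applies a single FGSM step, and `minimal_l2_linear` applies the closed-form smallest Euclidean perturbation for a linear binary classifier, which is the step that DeepFool repeats on non-linear models. Treat it as an illustration of the idea rather than a reference implementation of either attack.

```python
import math

def score(w, b, x):
    """Linear score w.x + b of the toy model."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def predict_proba(w, b, x):
    """Probability of class 1 under logistic regression."""
    return 1.0 / (1.0 + math.exp(-score(w, b, x)))

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(w, b, x, y, eps):
    """One FGSM step: move every feature by eps along the sign of the loss gradient."""
    p = predict_proba(w, b, x)
    # For cross-entropy loss, d(loss)/dx = (p - y) * w
    grad = [(p - y) * wi for wi in w]
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]

def minimal_l2_linear(w, b, x, overshoot=0.02):
    """Smallest Euclidean step that crosses a linear decision boundary.

    The small overshoot pushes the point just past the boundary.
    """
    norm_sq = sum(wi * wi for wi in w)
    scale = -(1.0 + overshoot) * score(w, b, x) / norm_sq
    return [xi + scale * wi for xi, wi in zip(x, w)]

def l2_distance(a, c):
    return math.sqrt(sum((ai - ci) ** 2 for ai, ci in zip(a, c)))

# Hypothetical model and input, chosen only for illustration.
w, b = [2.0, -1.0, 0.5], 0.1
x, y = [0.4, 0.2, 0.6], 1

x_fgsm = fgsm(w, b, x, y, eps=0.3)
x_min = minimal_l2_linear(w, b, x)

print("clean     p(class 1):", predict_proba(w, b, x))
print("FGSM      p(class 1):", predict_proba(w, b, x_fgsm))
print("minimal   p(class 1):", predict_proba(w, b, x_min))
print("FGSM L2 distance:   ", l2_distance(x, x_fgsm))
print("minimal L2 distance:", l2_distance(x, x_min))
```

In this toy setup both perturbed inputs end up on the other side of the decision boundary. FGSM changes every feature by the same fixed amount, while the minimal-distance step changes the input only as much as needed in Euclidean terms.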

These are just some examples of Adversarial AI. Next, let’s discuss how to defend against Adversarial AI attacks.

How to defend against Adversarial AI attacks

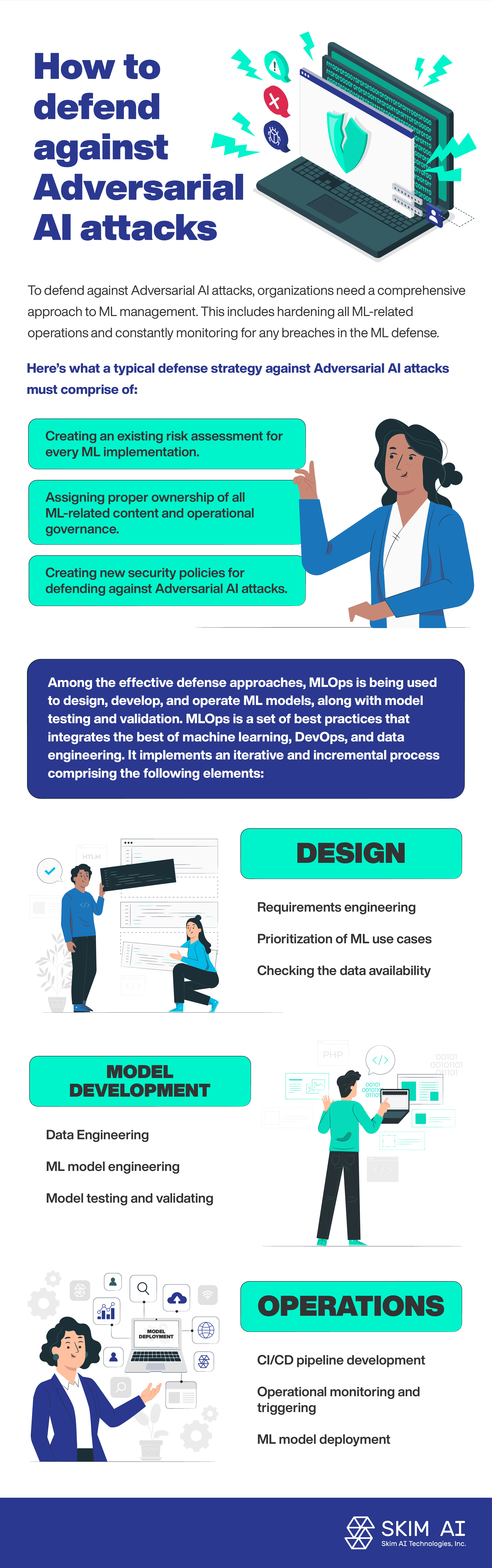

To defend against Adversarial AI attacks, organizations need a comprehensive approach to ML management. This includes hardening all ML-related operations and constantly monitoring for any breaches in the ML defense.

Here’s what a typical defense strategy against Adversarial AI attacks must comprise:

Creating a risk assessment for every existing ML implementation.

Assigning proper ownership of all ML-related content and operational governance.

Creating new security policies for defending against Adversarial AI attacks.

Among the effective defense approaches, MLOps is being used to design, develop, and operate ML models, along with model testing and validation. MLOps is a set of best practices that integrates the best of machine learning, DevOps, and data engineering. It implements an iterative and incremental process comprising the following elements:

Design:

Requirements engineering

Prioritization of ML use cases

Checking the data availability

Model development:

Data Engineering

ML model engineering

Model testing and validation

Operations:

ML model deployment

CI/CD pipeline development

Operational monitoring and triggering
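As one possible illustration of the model testing and validation step, the sketch below shows a simple robustness check that a pipeline could run before deployment. It assumes a toy linear classifier and a tiny hand-made validation set. The function names (`robust_accuracy`, `robustness_gate`) and the threshold values are hypothetical and not part of any MLOps tool.

```python
def predict(w, b, x):
    """Toy linear classifier: class 1 if w.x + b > 0, else class 0."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0

def sign(v):
    return (v > 0) - (v < 0)

def worst_case_input(w, x, y, eps):
    """Shift each feature by eps in the direction that hurts the true label most.

    For a linear model this is the worst perturbation within a per-feature budget of eps.
    """
    direction = 1 if y == 0 else -1  # raise the score for class 0, lower it for class 1
    return [xi + direction * eps * sign(wi) for xi, wi in zip(x, w)]

def robust_accuracy(w, b, samples, eps):
    """Fraction of samples still classified correctly after the worst-case shift."""
    correct = sum(
        predict(w, b, worst_case_input(w, x, y, eps)) == y for x, y in samples
    )
    return correct / len(samples)

def robustness_gate(w, b, samples, eps, min_accuracy):
    """Return True if the model is robust enough to pass this (hypothetical) check."""
    return robust_accuracy(w, b, samples, eps) >= min_accuracy

# Hypothetical model and validation data, for illustration only.
w, b = [1.0, -1.0], 0.0
samples = [
    ([2.0, 0.0], 1),
    ([0.3, 0.1], 1),
    ([0.0, 2.0], 0),
    ([0.1, 0.4], 0),
]

print("clean accuracy: ", robust_accuracy(w, b, samples, eps=0.0))
print("robust accuracy:", robust_accuracy(w, b, samples, eps=0.2))
print("gate passed:    ", robustness_gate(w, b, samples, eps=0.2, min_accuracy=0.9))
```

A check like this could run in the CI/CD pipeline next to the usual accuracy tests, so that a model that is accurate on clean data but fragile under small perturbations is flagged before it reaches production.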

Conclusion

According to a report by the U.S. National Security Commission on Artificial Intelligence, only a small percentage of AI research funding is invested in defending AI systems from adversarial attacks. Meanwhile, the growing adoption of AI and machine learning technologies correlates with an increase in Adversarial AI attacks. Effectively, AI and ML systems present a new attack surface, which increases security-related risks.